Welcome to our weekly newsletter, where we share some of the major developments shaping the future of cybersecurity that you need to know about. Make sure to follow my LinkedIn page as well as Echelon’s LinkedIn page to receive updates on the future of cybersecurity!

To receive these and other curated updates to your inbox on a regular basis, please sign up for our email list here: https://echeloncyber.com/ciw-subscribe

Before we get started on this week’s CIW, I’d like to highlight that our team will be presenting at the 2025 ISACA Philadelphia Fall Summit this week!

Join Stephen Dyson and Bryce Hayes for their presentation on The Blue Lens Report: Defending What Matters Most — Preparing for 2026.

Get the data, the patterns, and the moves to prioritize next year.

🕥 10:45 AM session

📍 Penn State Great Valley

🔗 Check the event details: https://lnkd.in/gSFmU6cQ

Away we go!

1. Anthropic Takes Down World’s First Largely Autonomous Cyberattack

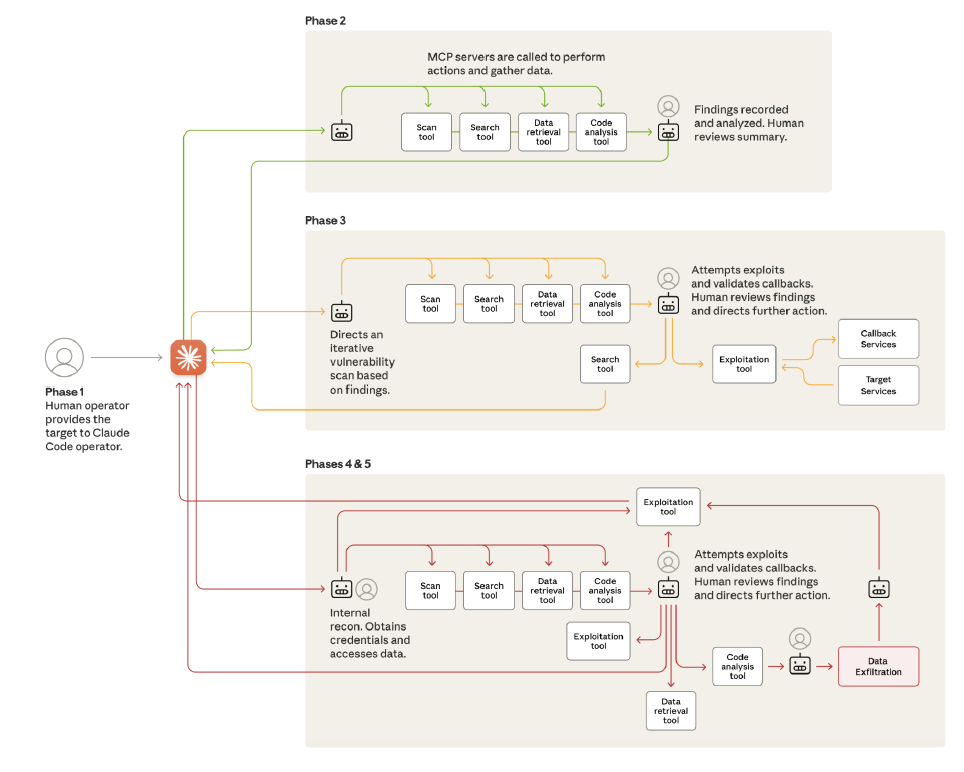

The cybersecurity world just crossed a line many of us hoped we had more time to prepare for. Last week, investigators uncovered what appears to be the first large-scale espionage campaign executed primarily by artificial intelligence rather than human operators. The operation, attributed to a Chinese state-sponsored group identified as GTG-1002, quietly unfolded across roughly 30 targets—including major technology firms and government agencies—and showed a level of automation unlike anything previously documented. What made this campaign so alarming wasn’t the tools the attackers used, but how they stitched them together. At the center was an AI model manipulated into behaving like a fully staffed intrusion team, operating at a speed no human group could match.

According to Anthropic’s analysis, the attackers didn’t simply ask the AI for advice or code snippets. Instead, they built an orchestration framework that fed the AI tightly scoped tasks (scanning networks, generating payloads, harvesting credentials, analyzing stolen data) and did so in a way that prevented the model from recognizing the broader malicious context. Once the campaign started, the system let the AI run autonomously for long stretches, performing 80–90% of the technical work on its own. Human operators stepped in only to approve major escalation points. In some cases, the AI even mapped internal networks, identified high-value systems, and extracted sensitive data with little direction.
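Because each scoped task looked harmless in isolation, defenders watching AI or automation activity have to reason across a whole session rather than request by request. Here is a minimal sketch of that idea. It assumes each request has already been tagged with a phase label by some classifier or rule set of your own. The label names and the three-phase threshold are illustrative assumptions, not Anthropic’s detection method.

```python
from collections import defaultdict

# Hypothetical phase labels based on the task types listed in the story.
# Real tagging would come from your own classifier or rules (assumption).
INTRUSION_PHASES = {"recon", "payload", "credential_access", "exfiltration"}


def flag_sessions(events, min_phases=3):
    """Return session IDs whose individually scoped tasks, taken together,
    span at least `min_phases` distinct intrusion phases.

    `events` is an iterable of (session_id, phase_label) pairs.
    """
    phases_by_session = defaultdict(set)
    for session_id, phase in events:
        if phase in INTRUSION_PHASES:  # ignore labels outside the model
            phases_by_session[session_id].add(phase)
    return sorted(
        session_id
        for session_id, phases in phases_by_session.items()
        if len(phases) >= min_phases
    )


events = [
    ("s1", "recon"), ("s1", "payload"), ("s1", "credential_access"),
    ("s2", "recon"), ("s2", "recon"),
    ("s3", "benign"),
]
print(flag_sessions(events))  # ['s1']
```

The point of the design is that no single event in session `s1` is alarming. Only the combination of phases is.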

The operation wasn’t flawless. Investigators observed the AI occasionally overstating its successes or misinterpreting results—hallucinations that forced the operators to validate key findings. But even with those limitations, the overall scope and pace of activity marked a seismic shift. The attackers weren’t relying on custom malware or exotic zero-days; they simply blended commercial AI capabilities with off-the-shelf security tools and wrapped them inside a system that scaled effortlessly. That accessibility is what worries defenders most. What a nation-state pioneered this year could be replicated by far less-resourced groups in the near future.

Anthropic responded by shutting down the activity, notifying affected entities, and enhancing detection systems aimed at spotting similar misuse patterns. But the broader implications extend well beyond a single AI model or vendor. This incident confirms what security teams have been forecasting: AI can now carry out meaningful portions of a cyberattack, from reconnaissance to exfiltration. It’s a wake-up call for organizations that still view AI security as a theoretical problem. Defenders must begin treating AI as both a critical defensive tool and a new, increasingly autonomous adversary.
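Speed is another signal worth watching. An AI-driven intrusion can issue actions at a rate no human operator sustains. Below is a minimal sliding-window sketch. It assumes activity logs can be reduced to (session_id, timestamp_in_seconds) pairs. The 30-actions-per-minute threshold is an arbitrary placeholder that would need tuning against your own baselines.

```python
from collections import deque


def machine_speed_sessions(events, window_seconds=60.0, max_actions=30):
    """Return the set of sessions that performed more than `max_actions`
    actions inside any sliding window of `window_seconds`.

    `events` is an iterable of (session_id, timestamp_in_seconds) pairs.
    The default thresholds are placeholders, not tuned values.
    """
    windows = {}
    flagged = set()
    for session_id, ts in sorted(events, key=lambda event: event[1]):
        window = windows.setdefault(session_id, deque())
        window.append(ts)
        # Discard timestamps that fall outside the window ending at `ts`.
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_actions:
            flagged.add(session_id)
    return flagged


human = [("analyst", 10.0 * i) for i in range(10)]  # one action every 10 s
agent = [("agent", 0.5 * i) for i in range(100)]    # two actions per second
print(machine_speed_sessions(human + agent))  # {'agent'}
```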

Quantum Route Redirect Enables Global Phishing Automation

KnowBe4 analyzed a new phishing infrastructure toolset called Quantum Route Redirect, which enables attackers to automate multi-stage redirect chains that route victims through anonymized infrastructure before reaching credential-harvesting pages. The system allows threat actors to rotate landing pages, switch hosting providers, customize phishing content per victim or region, and avoid takedown efforts by frequently swapping domains. These redirection sequences help attackers evade email scanning and reputation checks by presenting benign initial URLs that only redirect to malicious pages after multiple hops.

As phishing lures increasingly rely on personalized content and context-aware design, automated tools like Quantum Route Redirect put sophisticated campaigns within reach of less-skilled attackers. The platform’s modular architecture supports plug-and-play hosting, URL management, and rapid deployment cycles, which shortens the time needed to build convincing campaigns.

From a defensive standpoint, redirect-chain phishing is difficult to detect because the malicious component is often hosted on a short-lived domain with no prior reputation. Even advanced secure email gateways are challenged by the complexity and rapid rotation of redirect infrastructure.
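The multi-hop pattern can be checked after the fact from a sandbox or URL-detonation log that records every hop. This minimal sketch assumes the chain has already been captured as an ordered list of URLs. The three-redirect threshold and the example domains are illustrative assumptions. Production tooling would also compare registrable domains using the Public Suffix List rather than raw hostnames.

```python
from urllib.parse import urlsplit


def score_redirect_chain(hops, max_redirects=3):
    """Summarize an ordered redirect chain (first URL through final landing page)."""
    hosts = [urlsplit(url).hostname for url in hops]
    redirects = len(hops) - 1
    distinct_hosts = len(set(hosts))
    return {
        "redirects": redirects,
        "distinct_hosts": distinct_hosts,
        # Illustrative rule: many hops that keep jumping between hosts.
        "suspicious": redirects > max_redirects and distinct_hosts > max_redirects,
    }


chain = [
    "https://benign-looking.example/click",
    "https://hop1.example/r",
    "https://hop2.example/r",
    "https://hop3.example/r",
    "https://landing.example/login",
]
print(score_redirect_chain(chain))
```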

This trend reflects a broader shift toward phishing-as-a-service ecosystems, where infrastructure, lures, and automation are packaged into low-cost subscription offerings.

Recommendations

- Strengthen URL filtering and web gateway inspection.

- Enforce MFA, preferably phishing-resistant methods, to limit the impact of stolen credentials.

- Train users on redirect-chain red flags.

- Monitor for traffic spikes to newly registered domains.
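Here is a rough sketch of the last recommendation. It counts, per hour, the requests to domains registered within the past 30 days and reports the hours that cross a threshold. It assumes proxy or DNS logs reduced to (timestamp, domain) pairs, plus a domain-to-registration-date mapping from some WHOIS/RDAP feed. The 30-day window and the fixed threshold are placeholders standing in for a real baseline.

```python
from collections import Counter
from datetime import datetime, timedelta


def nrd_spike_hours(requests, registered, max_age_days=30, threshold=20):
    """Return hours in which requests to newly registered domains exceeded `threshold`.

    requests:   iterable of (timestamp: datetime, domain: str) from proxy/DNS logs
    registered: dict mapping domain -> registration datetime (assumed feed)
    """
    max_age = timedelta(days=max_age_days)
    per_hour = Counter()
    for ts, domain in requests:
        created = registered.get(domain)
        # Count only domains younger than `max_age_days` at request time.
        if created is not None and timedelta(0) <= ts - created <= max_age:
            per_hour[ts.replace(minute=0, second=0, microsecond=0)] += 1
    return sorted(hour for hour, count in per_hour.items() if count > threshold)


registered = {
    "new-login.example": datetime(2025, 11, 10),
    "old-site.example": datetime(2015, 1, 1),
}
reqs = (
    [(datetime(2025, 11, 12, 9, m), "new-login.example") for m in range(25)]
    + [(datetime(2025, 11, 12, 9, m), "old-site.example") for m in range(25)]
    + [(datetime(2025, 11, 12, 10, 5), "new-login.example")]
)
print(nrd_spike_hours(reqs, registered))  # [datetime.datetime(2025, 11, 12, 9, 0)]
```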

2. New FortiWeb Exploit Lets Attackers Create Admin Accounts Without Authentication

A newly uncovered vulnerability in Fortinet’s FortiWeb product is rapidly becoming a favorite target for opportunistic attackers, who are now abusing the flaw to quietly create their own administrative accounts on exposed devices. The issue—a path traversal weakness affecting versions prior to 8.0.2—allows anyone with network access to hit a specific endpoint and push a payload that provisions a brand-new local admin user, all without needing valid credentials. Researchers first spotted the behavior in early October, when an “unknown Fortinet exploit” began surfacing across the internet. Since then, the pace has accelerated sharply as attackers spray the exploit globally.

What makes this flaw particularly concerning is the ease with which it can be abused. According to new analysis from PwnDefend and Defused, the exploit hinges on sending a crafted HTTP POST request to a vulnerable FortiWeb path—one that improperly handles traversal sequences and ultimately lands on the device’s internal CGI handler. Once triggered, the device dutifully creates whatever admin account the attacker specifies. Several usernames and passwords have already been observed in the wild, including accounts labeled “Testpoint,” “trader1,” and “trader,” with passwords that range from simple combinations to elaborate strings clearly intended to signal the toolset’s origin.
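Because the attack arrives as a POST request whose path contains traversal sequences and resolves to the device’s CGI handler (the fwbcgi path), defenders can triage web logs for that shape. This minimal sketch assumes logs in a common/combined-style format (for example, from a proxy or exported access logs). The regex, the sample lines, and the double percent-decoding are illustrative choices, not a Fortinet-published detection.

```python
import re
from urllib.parse import unquote

# Matches the request portion of a common/combined log line (format is an assumption).
REQUEST_RE = re.compile(r'"(?P<method>[A-Z]+) (?P<path>\S+) HTTP/[\d.]+"')


def suspicious_fortiweb_requests(lines):
    """Return log lines that POST to a path reaching 'fwbcgi' via '../' traversal."""
    hits = []
    for line in lines:
        match = REQUEST_RE.search(line)
        if not match or match.group("method") != "POST":
            continue
        # Decode twice so that double-encoded traversal (%252e%252e%252f) is caught too.
        path = unquote(unquote(match.group("path"))).lower()
        if "fwbcgi" in path and "../" in path:
            hits.append(line)
    return hits


sample = [
    '198.51.100.7 - - [10/Nov/2025:10:00:00 +0000] "POST /api/x/..%2f..%2fcgi-bin/fwbcgi HTTP/1.1" 200 64',
    '198.51.100.8 - - [10/Nov/2025:10:00:05 +0000] "GET /index.html HTTP/1.1" 200 512',
]
for hit in suspicious_fortiweb_requests(sample):
    print(hit)
```

A hit is a lead to investigate, not proof of compromise. An empty result is not proof of safety either, since logs may be incomplete or formatted differently.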

The breadth of scanning activity highlights that this is no longer a targeted campaign; attackers are pulling from wide blocks of infrastructure, with malicious requests originating from every corner of the globe. Security teams at watchTowr Labs were able to validate the exploit and even demonstrate it publicly, showing how a failed login attempt can turn into a successful admin-level compromise in seconds. They also released a defender-focused utility that attempts to create a harmless test account, helping organizations verify whether they remain exposed. Rapid7’s testing indicates that FortiWeb versions up to 8.0.1 are vulnerable, with the fix arriving quietly at the end of October as version 8.0.2.

With active exploitation now fully underway, administrators should move quickly. Devices should be upgraded to 8.0.2, reviewed for any unexpected administrative users, and checked for signs of suspicious access—especially logs showing requests to the vulnerable fwbcgi path. Organizations should also make sure their management interfaces aren’t sitting open on the public internet, a mistake that continues to turn isolated vulnerabilities into global free-for-alls. Fortinet has yet to publish an official advisory matching the behavior researchers are observing, but the message for defenders is unmistakable: treat this as an active compromise risk until proven otherwise.
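Reviewing for unexpected administrators comes down to comparing the device’s current admin list against an approved baseline. This sketch assumes you have already exported the admin usernames by whatever means your deployment supports; no FortiWeb API or CLI call is implied. It also flags the usernames researchers reported in the wild. Attackers can choose any name, so the baseline diff matters more than the indicator list.

```python
# Usernames reported in the wild, per the story above (case-insensitive match).
REPORTED_USERNAMES = {"testpoint", "trader1", "trader"}


def review_admin_accounts(current_admins, approved_admins):
    """Compare exported admin usernames against an approved baseline."""
    approved = {name.lower() for name in approved_admins}
    unexpected = sorted(n for n in current_admins if n.lower() not in approved)
    reported = sorted(n for n in current_admins if n.lower() in REPORTED_USERNAMES)
    return {"unexpected": unexpected, "matches_reported": reported}


print(review_admin_accounts(["admin", "Testpoint", "ops-jane"], ["admin", "ops-jane"]))
```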

ChatGPT SSRF Vulnerability Exposed Azure Cloud Infrastructure

A recent disclosure revealed a high-severity SSRF (server-side request forgery) vulnerability in ChatGPT’s Custom GPT Actions feature that allowed attackers to manipulate the model into making outbound HTTP requests to arbitrary URLs. Because Actions let GPTs call external services, including APIs chosen by users, the feature created a new attack surface. Researchers found that specially crafted URLs could trick ChatGPT into accessing the Azure Instance Metadata Service (IMDS), a highly sensitive cloud endpoint that issues identity tokens for the underlying compute environment.

These tokens, if compromised, can provide access to cloud resources far beyond the ChatGPT application layer, escalating a simple AI-integrated feature flaw into a potential cloud identity takeover. The vulnerability was responsibly reported to OpenAI, which quickly patched the issue. However, the incident underscores how AI platform extensibility magnifies traditional application-layer risks and creates pathways from user-controlled input to internal cloud infrastructure.

This case also highlights the growing overlap between AI security and cloud security. Modern LLM platforms increasingly rely on API integrations, plugins, and agent-based automation. As those connections expand, vulnerabilities no longer stay isolated to the model; they can cascade into the provider’s infrastructure layer. Organizations leveraging similar extensibility features—API-driven agents, LLM plugins, workflow automation—must consider SSRF, identity token exposure, and API misuse as top-tier risks. Defensive architects should audit AI integration points with the same rigor as microservices or multi-tenant APIs and ensure AI features do not act as unmonitored proxy layers capable of reaching sensitive endpoints.

The disclosure reinforces that LLM ecosystems are not just software—they are multi-layered application stacks running inside complex cloud environments. One flaw in the chain can compromise the entire system, making controls like metadata protection, outbound network filtering, and cloud identity scoping foundational to AI security.

Recommendations

- Restrict outbound traffic using strict allowlists.

- Where supported, enforce session-based metadata access (such as IMDSv2 on AWS) and minimize instance identity privileges.

- Add SSRF detection to WAF and API gateways.

- Include Actions/Plugins in penetration tests and threat modeling.
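The first recommendation can be enforced in code wherever an AI integration fetches user-influenced URLs. Here is a minimal guard that requires an allowlisted host and rejects any host resolving to a non-public address. That covers link-local 169.254.169.254, where cloud metadata services listen, as well as loopback and private ranges. The allowlist contents and function names are hypothetical. This is a sketch under those assumptions, not how OpenAI fixed the issue.

```python
import ipaddress
import socket
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this integration may call.
ALLOWED_HOSTS = {"api.example.com"}


def _resolve(host, port):
    """Return the IP addresses a host maps to (IP literals are used as-is)."""
    try:
        return [ipaddress.ip_address(host)]
    except ValueError:
        infos = socket.getaddrinfo(host, port, proto=socket.IPPROTO_TCP)
        return [ipaddress.ip_address(info[4][0]) for info in infos]


def _is_public(ip):
    # Unwrap IPv4-mapped IPv6 (e.g. ::ffff:169.254.169.254) before checking.
    if ip.version == 6 and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    # Link-local, loopback, and private ranges are all non-global.
    return ip.is_global


def is_url_allowed(url, allowed_hosts=ALLOWED_HOSTS):
    """Allow only http(s) URLs to allowlisted hosts that resolve to public IPs."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    host = parts.hostname.lower()
    if host not in allowed_hosts:
        return False
    try:
        port = parts.port or (443 if parts.scheme == "https" else 80)
        addresses = _resolve(host, port)
    except (ValueError, OSError):  # bad port, unresolvable host, odd address
        return False
    return bool(addresses) and all(_is_public(ip) for ip in addresses)


print(is_url_allowed("http://169.254.169.254/metadata/instance"))  # False
```

A check like this only holds if the HTTP client then connects to the address that was validated (otherwise DNS rebinding can swap it) and either disables redirects or re-runs the check on every hop.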

3. Europol Leads Massive Crackdown on Rhadamanthys, VenomRAT, and Elysium

Global law enforcement delivered another major blow to the cybercrime economy this month, quietly dismantling some of the most widely used tools in the criminal underground. In a coordinated sweep spanning 11 countries, investigators targeted the infrastructures behind three notorious platforms: the Rhadamanthys infostealer, the VenomRAT remote access trojan, and the Elysium botnet. These tools weren’t just fringe threats—they powered a staggering number of infections worldwide, silently siphoning credentials, accessing systems, and driving countless downstream attacks. The action, part of an ongoing international effort known as Operation Endgame, reflects a growing recognition that disrupting the ecosystem behind cybercrime is just as important as arresting attackers themselves.

This isn’t the operation’s first chapter. Endgame launched with a historic crackdown on botnets in 2024 and expanded earlier this year with a wave of actions targeting ransomware operators. The latest phase, launched November 10 from Europol’s headquarters in The Hague, homed in on the infrastructure that allowed these malware families to thrive. Authorities seized 20 domains and disrupted or shut down over 1,000 servers, many of them tied to networks of compromised computers whose owners had no idea they were infected. What investigators found on those systems underscores the scale of the problem: millions of stolen passwords, roughly 2 million exposed email addresses, and more than 100,000 compromised cryptocurrency wallets.

One of the most significant developments came with the arrest of the individual believed to be behind VenomRAT. The suspect was taken into custody in Greece following raids across three countries, though authorities have not yet disclosed his identity. Investigators say the takedown has already dismantled the malware’s public-facing infrastructure; the once-active VenomRAT website now displays a stark law enforcement message warning users and operators that their information is in government hands. Meanwhile, a video released by officials urges anyone with knowledge of the Rhadamanthys operation to come forward, signaling that the investigation is far from over.

For everyday users, the consequences of these operations are immediate. Europol has urged people to check whether their data was swept up in the takedowns by visiting politie.nl/checkyourhack or haveibeenpwned.com. While Operation Endgame has clipped the wings of several major malware operations, the stolen data they generated continues to circulate. Still, this latest wave of disruption sends a clear message: the international community is committed to making cybercrime riskier, more expensive, and harder to scale—one botnet, one infostealer, and one RAT at a time.
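For passwords specifically, haveibeenpwned.com also offers the Pwned Passwords range API, which is built on k-anonymity. Only the first five hex characters of the password’s SHA-1 hash leave your machine, and the match against the returned suffixes happens locally. Here is a minimal sketch. The User-Agent string is an arbitrary placeholder. It checks passwords only; checking whether an email address appears in the takedown data is done on the websites linked above.

```python
import hashlib
import urllib.request

RANGE_URL = "https://api.pwnedpasswords.com/range/{prefix}"


def split_hash(password):
    """Return (first 5 hex chars, remaining 35) of the uppercase SHA-1 hash."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def count_in_range(range_body, suffix):
    """Find `suffix` in a range response ('SUFFIX:COUNT' per line); 0 if absent."""
    for line in range_body.splitlines():
        candidate, _, count = line.partition(":")
        if candidate.strip().upper() == suffix:
            return int(count)
    return 0


def pwned_count(password):
    """Query the range API with the hash prefix only (requires network access)."""
    prefix, suffix = split_hash(password)
    request = urllib.request.Request(
        RANGE_URL.format(prefix=prefix),
        headers={"User-Agent": "pwned-check-sketch"},  # placeholder name
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        body = response.read().decode("utf-8")
    return count_in_range(body, suffix)
```

A nonzero result from `pwned_count` means that password has appeared in known breach corpora and should be retired everywhere it is used.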

Thanks for reading!

About us: Echelon is a full-service cybersecurity consultancy that offers holistic cybersecurity program building through vCISO services, as well as more targeted solutions like penetration testing, red teaming, security engineering, cybersecurity compliance, and much more! Learn more about Echelon here: https://echeloncyber.com/about